Commentary

How Two Similar School Districts Get Such Different Results

Study suggests funding levels have little impact on student growth

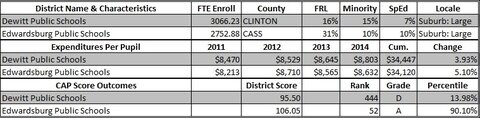

On the surface, DeWitt Public Schools, just north of Lansing, and Edwardsburg Public Schools, on the Indiana border, look very similar. Each serves about 3,000 students in a predominantly white community with a poverty rate at or below the state average, though DeWitt’s median income level and rate of college-educated residents are noticeably higher.

Each district (DeWitt in Clinton County and Edwardsburg in Cass County) operates one comprehensive high school, one middle school, and three elementary campuses. Each fields a Division 3 football team that was a 2015 playoff contender.

But when it comes to the results their students demonstrate on state tests, the similarities end. Edwardsburg students outperformed their DeWitt counterparts on 14 of the 20 M-STEP tests administered last year to students in grades 3 through 11, despite the district serving more students with special needs and having a Free and Reduced Lunch (FRL) rate nearly twice as high.

The same trend is observed on the Mackinac Center’s Context and Performance Report Cards. As a general rule, students from low-income households score lower on tests than other students, which may skew comparisons between a wealthy district and a less well-off one. To avoid the trap of judging the schools based on the economic status of the students they enroll, as the state’s Top-to-Bottom rankings do, the report cards adjust multiple years of testing data according to the rate of free lunch-eligible students served. A “CAP score” of 100 indicates a school meets expectations based on its student population, with higher scores indicating a school that beats the odds.

A combined look at the 2014 high school report card and the newer elementary and middle school edition reveals that Edwardsburg’s lowest school CAP score (101.81) beat the best showing from any of DeWitt’s schools (99.68). Averaging the school results into a district-level CAP score, Edwardsburg rates more than a full 10 points higher (106.05 vs. 95.50), finishing in the top 10 percent of all Michigan school districts. DeWitt’s combined district CAP score ranks in the bottom 14 percent statewide.

Money doesn’t explain the disparity in performance. Current expenditure data from the U.S. Census Bureau, covering the same four-year period as the test results measured in Mackinac’s CAP scores, show the two districts spending nearly the same amount per student.

The coming weeks are scheduled to bring the release of Michigan’s taxpayer-funded adequacy study, a $399,000 project almost certain to tell us that the state needs to increase education funding. Official documents for the project indicate the recommendation will be based on the funding levels of “exemplary districts” that exceed proficiency averages on the 2014 Michigan Merit Examination given to 11th-graders statewide.

Both DeWitt and Edwardsburg qualify as “exemplary” under this model, yet both also stand among the state’s lowest-spending districts. Edwardsburg performs significantly better relative to the expectations set by its student population, yet it continues to spend slightly less: $8,811 per pupil in 2014-15, compared to DeWitt’s $9,136.
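For readers who want to check the arithmetic behind the comparison, the short sketch below recomputes the two gaps from the figures quoted in this commentary. The variable names are illustrative only, and the percentage is simply the spending difference taken relative to DeWitt's per-pupil figure, which is one of several reasonable ways to express it.

```python
# Figures as quoted in the commentary; names are illustrative, not from any dataset.
cap_scores = {"Edwardsburg": 106.05, "DeWitt": 95.50}       # district-level CAP scores
spending_per_pupil = {"Edwardsburg": 8811, "DeWitt": 9136}  # 2014-15, in dollars

# How far Edwardsburg's district CAP score sits above DeWitt's.
cap_gap = round(cap_scores["Edwardsburg"] - cap_scores["DeWitt"], 2)

# How much more DeWitt spends per pupil, in dollars and as a share of its own figure.
spending_gap = spending_per_pupil["DeWitt"] - spending_per_pupil["Edwardsburg"]
spending_gap_pct = round(100 * spending_gap / spending_per_pupil["DeWitt"], 1)

print(f"CAP score gap: {cap_gap} points")
print(f"Spending gap: ${spending_gap} per pupil ({spending_gap_pct}% of DeWitt's figure)")
```

On these numbers, the higher-scoring district is also the one spending a few hundred dollars less per student.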

This isolated district comparison may raise eyebrows about the adequacy study’s approach. But it’s the new Mackinac Center report, “School Spending and Student Achievement in Michigan: What's the Relationship?”, which clearly undercuts the assumption that more money will improve results statewide.

The report put extensive financial and academic data from more than 4,000 individual public schools through a careful analysis. It examined 28 different academic indicators, covering test scores and graduation rates. Increased spending registered a statistically significant relationship with only one of the 28 indicators. That outlier wasn’t exactly impressive: a 10 percent increase in spending is projected to raise the average seventh-grade math score by a mere fraction of a point.

The basis for taxpayers’ skepticism regarding the effectiveness of future funding increases lies deeper than the case of DeWitt and Edwardsburg. A closer look at Michigan’s numbers justifies the need to focus instead on how current dollars are being used.

Michigan Capitol Confidential is the news source produced by the Mackinac Center for Public Policy. Michigan Capitol Confidential reports with a free-market news perspective.